Digitisation in the public sector

The Why Not Lab is engaged in capacity-building projects across the world, bridging theoretical understanding with practical workplace realities. To empower workers, Christina Colclough has developed a number of collective bargaining and policy tools and guides. Christina is a Fellow of the Royal Society of Arts in the UK, an Advisory Board member of the Carnegie Council AI and Equality Initiative and a member of the OECD AI Expert Group, and is affiliated with Copenhagen University.

This report presents recommendations for union action as public services, and work within them, become increasingly digitised. Methodologically, the report is based on interviews with trade unions and workplace representatives conducted in late 2022, as well as desk research.

The report starts with an introduction to the current digitisation trajectory and the interviewees’ experiences. Three sections then follow, each listing recommendations for union action. The first is concerned with the level of national policy, the second with sectoral and workplace collective bargaining and the third with training needs and structures for union officers and workplace representatives.

Whilst the introduction describes the wider environment surrounding the digitisation of public services and its effects on workers, the recommendations focus exclusively on issues related to the deployment and governance of digital tools and systems.

Introduction

The 2021 UK government commitment 1 to transform public services through digitisation was reaffirmed in February 2023, when Prime Minister Rishi Sunak announced a reshuffle that will see the creation of a new Department for Science, Innovation and Technology (SIT). SIT will be asked to “drive innovation that will deliver improved public services” as well as create “new and better-paid jobs.” 2

It is not clear whether the PM is referring in this statement to better-paid jobs in the public sector, the private sector or both. However, hints can be found in the oft-stated government ambition to make the UK a global tech hub. As recently as January 2023, in a speech to an audience of tech representatives from the likes of Meta, Microsoft, Amazon, Apple and Google, Chancellor Jeremy Hunt spoke of his desire to turn the country into the “world’s next Silicon Valley”. 3 This points to a public-private relationship in which public services will rely heavily on innovation from the private sector. Indeed, gross spending on public sector procurement, a significant share of which presumably goes to digital technologies and innovative solutions, is on the rise. It was £379 billion across the UK in 2021/22, an increase of £24 billion, or 7%, on 2020/21.

A general election will take place within two years, making any prediction about the impact of these departmental changes uncertain. However, should the government’s trajectory continue, it seems unlikely that the ‘new and better-paid jobs’ referred to by the PM will be public service jobs.

Furthermore, whilst SIT distinguishes between science, innovation and technology as three separate entities, the aim to deliver ‘improved public services’ will almost certainly be pursued through the continued and expanded use of digital technologies. This is not a new endeavour in UK public services. For example, the scandalous Horizon system developed by Fujitsu cost £1bn, excluding the £58mn claimants have won in damages. 4 Common Platform, which began rolling out in 2020 as an agile project, has a £300mn budget and is a key strand of the wider £1bn digital transformation of the courts system. The cost of developing Universal Credit rose from £3.2bn in 2018 to £4.6bn in 2020 and is expected to be much higher by 2026, when the delayed parts of the project are forecast to finally be delivered. Private sector company Palantir is set to bid for the 2023 contract to operate the NHS’s main data platform, which, at £360mn, is the most lucrative element of a five-year, £400mn contract. 5 The Home Office’s Data Services and Analytics (DSA) has signed an initial four-year contract with PA Consulting to ‘support data and analytics’. The deal came into effect on 1 December 2022 and is valued at £40mn.

These digital systems are being developed to improve the efficiency of public services. Horizon was designed to replace a legacy system and has been used for tasks such as transactions, accounting and stocktaking. Universal Credit aims to replace six benefits previously paid to people who were out of work, struggling with ill health or on a low income, simplifying the system and reducing costs. The Common Platform aims to replace five separate case-management tools currently in use with a single digital system for use across the justice system. The NHS project aims to bring tens of millions of personal digital medical records into one of the biggest health data platforms in the world in order to improve care and provide new insights into the nation’s health.

All have been heavily criticised by trade unions and privacy groups. In 2018, the TUC passed a motion calling on the government to ‘Stop and Scrap Universal Credit’, believing that both the policy and design of Universal Credit are fundamentally flawed. 6 Responding to the 2021 UK Government Green Paper “Transforming Public Procurement”, GMB stated that:

“The Cabinet Office engaged with over 500 stakeholders and organisations through many hundreds of hours of discussions and consultations and workshops in producing the Green Paper. However, the fact that GMB and the wider trade union movement was not approached for input into this important exercise shows an unacceptable lack of representative views gathered in this process.”

In January 2023, the trade union PCS escalated strike action in its long-running dispute over the use of the failing Common Platform system. 7 The CWU, representing subpostmasters, is tirelessly battling for justice for the hundreds of subpostmasters who were falsely accused of fraud and theft. 8

Campaign group openDemocracy launched legal action against the UK Government in February 2021, raising concerns about the NHS database and Palantir’s involvement beyond the pandemic. 9 In response, the UK government promised in March 2021 not to expand the role of Palantir’s NHS England database without public consultation. However, in January 2023, the NHS extended its controversial contract with Palantir by six months, adding £11.5 million in value, without public consultation. 10

Efficiency at what cost?

Whilst the government believes that digital innovations will improve public service efficiency, other cost-cutting measures are also in play: wage freezes; cuts to staff, offices, job centres and courts; the streamlining of services; the hiring of staff on fixed-term contracts; and the centralisation of many functions. For a decade, civil servants have either been subject to a pay freeze or received below-inflation pay rises capped at 2-3%. 11 Across public sector employment, 2.1 million workers earned less than £24,000 a year; in 2022 they received a statutory pay uplift from the government. However, nearly one in ten frontline civil servants (47,000), including those working in the Department for Work and Pensions, are themselves on Universal Credit to boost their pay as the cost-of-living crisis drags more and more people into financial trouble.

According to PCS, civil servants have seen their living standards plummet by around 20% in real terms over the last decade. 12 Nurses have lost £42,000 in real earnings since 2008, the equivalent of £3,000 a year. For midwives and paramedics, the losses are more than £56,000, the equivalent of £4,000 a year. 13 In the education sector, teachers and school leaders have lost around a quarter of their pay since 2010, according to separate analysis by the NEU. 14

More than 40,000 civil servants 15 and 5,000 NHS staff, of whom 550 were nurses, 16 have had to resort to food banks during the last year. One in 10 teachers now has a second or even third job because their teaching pay does not cover their monthly outgoings. 17 More than 1 in 4 (28.4%) children with care worker parents are growing up in poverty, and the TUC estimates that 1 in 5 (19%) key worker households have children living in poverty. 18

As one interviewee stated:

Folk can’t afford to get to work at the end of the month, so would phone in sick or take leave

In 2022, then PM Boris Johnson announced plans to cut 91,000 civil service jobs. 19 PM Sunak later retracted these plans, but that has not stopped job losses: PCS announced in January 2023 that 668 Department for Work and Pensions (DWP) staff had lost their jobs due to back-office closures. Those who remain in work are additionally threatened by automation. Unite believes that more than 230,000 of its 1.4 million members could lose their jobs to automation by 2035, with workers in health and local government most at risk. 20

This raises the question: are public services actually becoming more efficient? Whilst the government maintains that the cost of Universal Credit is far outweighed by its benefits, the public service trade unions and workplace representatives interviewed for this report spoke of worsening working environments. Across various sectors, they reported:

- High levels of staff turnover leading to excessive amounts of time spent on training new recruits and then losing them again.

- Staff turnover driven up further by the hiring of workers on fixed-term contracts.

- Little time to train new recruits or be trained in new systems. Most training took place online via videos.

- No meaningful consultation with workers on the new systems or tools, on features that workers find faulty or difficult to understand, or on new features they would like in order to ease their work.

- Faulty systems that force workers to double-file, both in the digital systems and on paper, to check for and work around system faults. This in turn leads to increased stress levels, longer working hours and job dissatisfaction.

- Faulty systems that require manual overrides. New recruits who have not yet learnt where the systems typically fail, and what the workarounds are, make mistakes, which increases their emotional stress and leads many of them to leave the job again.

- Huge backlogs partially due to the lack of staff, but also due to system faults and/or private vendors who do not fulfil their contractual obligations.

- Increased time spent on administrative tasks, especially as administrative workers have been made redundant as part of the cost-saving measures.

- Low work morale and emotional distress.

- Performance management pressures.

- A decline, as work becomes digitised, in jobs suitable for neurodivergent and/or disabled staff (referred to by management as “low value work”), which is narrowing the labour market for these workers.

- Increase in musculoskeletal conditions.

Although the interviewees agreed that the aim of the Common Platform is sensible on paper, and that both it and Universal Credit have improved as systems, the experiences they describe are hardly boosting public service efficiency. They are also harming members of the public. As one interviewee said:

The change in the last 20 years in the way that claimants are treated is awful. Claimants are being demonised. As if they are liars. They need to go over and above to prove to us they deserve this benefit. The barriers that people come across are leading to exhaustion. To actually get to a tribunal can take up to 2 years. The paperwork needed is enormous. It requires a full time job almost. 65-70% of our negative decisions are overturned at tribunal.

Another interviewee, commenting on Universal Credit, continued:

Applying for benefits is a huge burden on citizens: I mean, I would consider the burden to be deliberate to make these applications as difficult as possible. The forms are so complex and difficult to understand.

A third added:

[The system] is an unmitigated disaster, it is totally unfit for purpose. What I put in to it, and what arrives aren’t necessarily the same thing. Inputs get changed. People are flagged as suicidal yet they are not. The system is designed to get rid of thousands of jobs. This means legal advisors are now doing the data entry rather than the administrative assistants. This in turn means that we can’t do what we are supposed to do, advising magistrates on all things related to the law, procedure, evidence, sentencing, the duty to ensure that those who are unrepresented are assisted to present their cases etc. So citizens are harmed.

Summarising the state of affairs

Whilst most interviewees sympathise with the need to keep public services efficient, the transition to the new digital technologies is riddled with problems of a structural, organisational and political nature.

Structurally, the systems’ design process and agile rollout mean that systems are put into use before they are complete and checked for errors. As a result, citizens are harmed and their rights violated. For workers, this is having detrimental effects on their rights and working conditions. Privacy, in particular third-party access to sensitive data by private sector developers and vendors, is also a major concern, although not one explicitly raised by the interviewees.

Organisationally, the lack of transparency, coupled with top-down rollout, the lack of meaningful consultation and deficient co-design/co-governance efforts, is violating workers’ dignity, rights, freedoms and autonomy.

Politically, the cost-saving aim of ‘improving’ public services is pursued partly through digitisation, but also through negative pay policies, layoffs, office closures and more. In addition, the increasing reliance on private sector solutions, and the lack of involvement of workers and/or their unions in this process, pose a threat to workers’ rights and to inclusive and diverse labour markets.

The next sections of this report are concerned with how unions can respond to these issues. They present concrete recommendations for union action through national policies and collective bargaining. Some of the recommendations relate directly to issues raised by the interviewees. Others address issues that were not raised but that matter for the union goal of ensuring quality public services and decent work in digitalised workplaces.

National Policy Recommendations

This section focuses on the national-level policies unions could push for in relation to the digitalisation of public services and work within these services. Where relevant, individual points are supplemented by notes describing the motive behind the recommendation. Please note that these recommendations supplement existing laws; they do not replace them.

Procurement/Supplier Contracts

Public procurement/supplier contracts must be made transparent to members of the public and workers alike. This includes information on vendors, developers and budget frames.

Note: This demand responds to interviewees’ reports that a freedom of information request about the developers and vendors involved was rejected on grounds of commercial sensitivity. Members of the public and workers alike should be informed about the identities and/or categories of data recipients and, through data subject access requests, have the right to access their data (see recent CJEU ruling). 21

Procurement/supplier contracts must include stringent clauses covering, as a minimum, joint data access and control, including the public service’s right to demand amendments to digital systems if (un)intended harms or other system faults are detected.

Note: This policy aims to ensure that public services maintain their autonomy relative to procurement partners and/or suppliers. This is especially relevant for access to the data derived through digital technologies. Without this access, public services will become dependent on the private sector to analyse the data. No data analysis is objective and free from interpretation. Hence, this policy is about safeguarding democracy.

Transparency

Public services should include in their budgets for digital technologies the energy, electricity and digital infrastructure costs of running those technologies, as well as the depreciation of natural resources that they cause. In addition, budgets should include estimates of the human capital costs of rationalisation measures, including job displacement, disruption, reskilling programmes and more (for inspiration, see Sir Partha Dasgupta’s work on Inclusive Wealth). 22

Public services must make information available about the algorithmic and/or artificial intelligence systems they, or a third party on their behalf, deploy. This includes guaranteeing the public’s and workers’ right to:

- Have insight into the systems, their purpose and the data used.

- Know what profiles (inferences) are made and for what purposes.

- Know who else has access to the data (third parties, public or private).

- Know what rights of redress they have.

- Know who in the public service is responsible for the systems deployed and whom they can turn to with questions and enquiries.

Note: Inspiration for this policy can be found in recent initiatives from Helsinki and Amsterdam 23, as well as in UK GDPR Articles 13 and 14 on the right to be informed.

Inclusive Governance

Developers/vendors must, in addition to legally or otherwise required data protection impact assessments and/or equality impact assessments, conduct Human Rights Impact Assessments and make these public. The deploying public service must conduct its own and make that public too. These impact assessments must be based on consultation with relevant stakeholders, including representatives of those who are subject to the system.

Note: The Netherlands’ Fundamental Rights and Algorithms Impact Assessment (FRAIA) is a tool for public institutions considering developing or purchasing an algorithmic system. It requires them to walk through the Why (purpose), the What (data and data processing) and the How (implementation and use) of the algorithm. Importantly, it ensures that decision-makers undertake an assessment of the likely impact of the use of algorithms on specific human rights. It uses a dialogue-oriented and qualitative approach, requiring consultation with stakeholders and consideration of different trade-offs to reach a final decision on whether and how to proceed. 24

Intrusive digital technologies that violate human rights must not be introduced into society.

All public service digital development projects should be overseen by an inclusive Advisory Board consisting of trade union representatives, central and local management representatives, developers, vendors and members of the public or their representatives, in equal proportions. This Board shall advise on needed features and guardrails, and address issues raised in the Human Rights Impact Assessments. When the project launches, the Advisory Board will be replaced by the Governance Body (see next item).

Public services must commit to governing the digital technologies they deploy on an ongoing basis through the establishment of a Governance Body. This Governance Body shall be inclusive, i.e. it shall include representatives of those who are subject to these systems (worker representatives and/or representatives of the citizens concerned), local and central management, and representatives of those tasked with using the systems in their daily work.

Note: Inspiration for this policy can be found in the UK GDPR. Here, the ICO, in line with the European Data Protection Board, recommends that data protection impact assessments of systems that process workers’ data should be conducted in consultation with the employees or their representatives. The ICO states:

“If the DPIA covers the processing of personal data of existing contacts (for example, existing customers or employees), you should design a consultation process to seek the views of those particular individuals, or their representatives.” 25

- The Governance Body shall at all times log its decisions.

- The Governance Body oversees ongoing impact assessments and sets follow-up plans. Any technical adaptations needed as a result are at all times subject to final approval by the Governance Body.

- The Governance Body shall also be mandated to handle complaints/enquiries from the public and/or staff. This includes establishing a whistleblower system.

Note: Inspiration for the themes the Governance Body could address can be found in this guide developed by the Why Not Lab. 26

The public authority responsible for introducing a new digital technology must ensure that management and the worker representatives on either the Advisory Board or the Governance Body have the necessary skills and competencies to meaningfully fulfil their roles.

Disruption’s Obligations

The deployment of digital technologies in public services will by nature be disruptive for the workforce. This disruption must be accompanied by obligations towards the workforce impacted. Therefore, the authorities must commit to:

- Offering the workforce concerned appropriate training modules, to be taken in working time as part of work duties.

- Offering displaced workers alternative work in the public service or, as a minimum, a career guidance mentor coupled with training courses.

Note: These obligations should apply equally when disruption is caused by other cost-saving measures such as office closures, restructuring programmes and the like.

Comments

The policy recommendations above address some of the workplace realities as reported by the interviewees. These include:

- The lack of transparency.

- The lack of meaningful consultation on the systems deployed, their effects on workers and the functionality requests made by workers.

- The lack of sufficient training in how to use the systems.

- The lack of meaningful governance of the (un)intended effects of the systems on the workforce and the public, and the lack of structures to deal with these effects in an inclusive manner.

They also seek to future-proof current intergovernmental discussions on AI regulation. In short, governments across the world are uniting around the idea of regulating AI through market access standards and certifications. Worryingly, none of the current discussions include requirements to govern technologies on an ongoing basis once they have been accepted onto the market. 27 By immediately using the existing consultation rights in the UK GDPR, and making full use of the existing Information and Consultation Regulations 28, trade unions have an entry point for establishing the governance structures proposed above.

Finally, many of the policy ideas proposed will be reflected or mirrored in collective bargaining demands - to which we now turn.

Collective Bargaining Recommendations

This section sets out collective bargaining recommendations in connection with the digitisation of work in public services. The themes presented should be seen as a supplement to existing union demands in collective bargaining, not as a replacement for them.

The recommendations mostly address issues that were raised only indirectly by interviewees. In other words, the interviewees described the consequences of the use of digital systems and some of the organisational, structural and political solutions that could overcome them, but they often did not go one step deeper to identify the root causes and what could be done to tackle those specifically. For example, this interviewee spoke about the need to work around the digital system because of its faults:

Sometimes we need to lie to the system otherwise we can’t get to the next stage. We call it “workarounds”. It is much improved compared to what it was. I used to train people on how to be a case manager. One of the most difficult things was to teach them how to use the system and the workarounds.

The root causes here are poor system design and poorly defined system purposes, and probably also poor communication between developers and deployers. If the workers had been meaningfully consulted on the digital systems, and if their feedback had been listened to and the systems amended, the need for workarounds would have disappeared rather than becoming, as seems to be the case, institutionalised. This indicates a much stronger need for inclusive governance across the entire design and deployment life cycle, a point developed below and one confirmed by an interviewee:

There is very little consultation. There are working task groups to give the impression they are listening. Middle even up to senior management they do sort of try to take our concerns back up. But the Executive are not taking us seriously.

The interview quotations presented in the introduction to this report spoke of harms to citizens. Again, if the digital systems were governed for (un)intended harms and outcomes, and the government had no intention of causing these harms, then surely they would have been rectified? These concerns therefore point to the need for monitoring of harms and benefits, and for strong contractual agreements between developers and deployers on the rectification of harms. Yet governance across the design and deployment life cycle was not directly mentioned by the interviewees.

Further, one interviewee said of an equality impact assessment:

The Equality Impact Assessment is not worth the paper it is written on. Whilst it has looked at those who may face redeployment, it hasn’t broken that down by gender, carers, disabled etc. We think carers and disabled people are the worst impacted. Generally women tend to be sandwich carers, so… you know.

Had the unions been involved from the start, and had a human rights impact assessment been conducted as suggested under Inclusive Governance in the National Policy Recommendations, the impact assessment would have been much more thorough and representative.

In relation to automated management systems, one interviewee described the performance management system in place:

We need to deliver volumes: our breaks are under pressure and under surveillance. We need to tick the boxes - that’s very very clear. That’s the culture they want. Under this performance management culture now, they have these behaviour criteria, so if you are too sort of disparaging about the department you will get pulled in. It's intimidating to staff, it’s not appropriate. We managed to get rid of the performance pay. They were setting arbitrary targets, so only a set number of people could get the highest pay even if they did well. Women and people with disabilities are most negatively affected by these changes. None of the automation has been done with those members in mind and the fact that they may not be able to operate the systems fast enough.

However, the interviewee did not mention the data extracted by such performance management systems, or what those data are used for. This is another example of an indirect issue of interest. The recommendation on Data Protection and Rights below offers some ideas on how worker representatives and unions can use the UK GDPR to safeguard their collective data rights, gain insight into how data is used and begin to negotiate guardrails. The recommendation on Limiting Worker Surveillance, under 'Additional Themes' below, aims to support the negotiation of surveillance guardrails and red lines.

All of the recommendations address these indirect issues and others linked to them. They supplement the TUC publications Dignity at Work and the AI Revolution: A TUC Manifesto and When AI Is the Boss, as well as the UNISON publication Bargaining over the use of new technology in the workplace. These publications are highly recommended, as some of their central recommendations will, for the sake of brevity, not be repeated below. The recommendations below also draw on work conducted with unions across the world on the digitisation of work and its impact on workers’ rights.

Introductory Remarks

The deployment of digital technologies in workplaces will have a significant impact on all aspects of work, from occupational health and safety, working time, work intensity, skills and training, restructuring and hybrid work to job and task disruption and/or displacement and equal opportunities. 29

Many of these issues are covered in the key publications referenced in this report. The following focuses exclusively on four core themes: Guiding Principles, Transparency, Data Protection and Rights, and Inclusive Governance. The section ends with an overview of additional themes that could usefully be included in collective agreements.

Guiding Principles

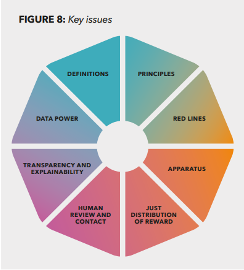

Firstly, though, it is important to note the TUC’s suggestions for eight key issues that could feature in a collective agreement (see figure, and more in their report People-Powered Technology, pages 23-37). 30

The key issue on “Principles” covers suggestions for potential principles and commitments that could be agreed upon by trade unions and employers in, for example, the preamble to an agreement. Whilst these are written to address automated management systems (AMS), such as performance monitoring systems, surveillance systems or scheduling tools, they are equally applicable to the systems that public service workers are required to use to perform their duties towards the public.

- 1 https://gds.blog.gov.uk/2021/04/06/the-next-steps-for-digital-data-and-technology-in-government/

- 2 https://www.gov.uk/government/news/making-government-deliver-for-the-british-people

- 3 https://www.uktech.news/news/government-and-policy/hunt-uk-silicon-valley-20230127

- 4 https://www.bbc.com/news/business-56718036

- 5 https://www.ft.com/content/a9228ce5-8662-474a-9b77-ca563cc5080a

- 6 https://www.tuc.org.uk/research-analysis/reports/replacement-universal-credit

- 7 https://www.pcs.org.uk/search?search_api_fulltext=common+platform+strik

- 8 https://committees.parliament.uk/writtenevidence/5124/html/

- 9 https://techmonitor.ai/government-computing/palantir-nhs-england-federated-data-platform-contract-award

- 10 https://www.theregister.com/2023/01/04/palantirs_covidera_uk_health_contract/

- 11 https://researchbriefings.files.parliament.uk/documents/CBP-8037/CBP-8037.pdf

- 12 https://www.globalgovernmentforum.com/uk-civil-servants-face-real-terms-pay-cut-as-unions-slam-governments-utter-contempt-for-officials/

- 13 TUC (2023) Patients overwhelmingly back striking NHS key workers – new TUC poll

- 14 https://neu.org.uk/press-releases/pay#:~:text=Teachers%20had%20already%20lost%20around,cuts%20between%202010%20and%202021.

- 15 https://www.pcs.org.uk/print/pdf/node/10013

- 16 https://www.nursingtimes.net/news/charities/charity-reports-more-than-500-nurses-using-food-banks-12-01-2023/

- 17 https://www.theguardian.com/education/2022/nov/27/one-in-10-uk-teachers-forced-to-do-second-jobs-to-keep-eating-amid-rising-costs

- 18 https://www.tuc.org.uk/news/1-4-children-care-worker-parents-are-growing-poverty

- 19 https://www.civilserviceworld.com/news/article/government-plans-to-cut-91000-civil-service-jobs

- 20 https://www.tuc.org.uk/sites/default/files/2022-01/DigitisationReport.pdf

- 21 https://www.insideprivacy.com/gdpr/gdpr-rights/court-of-justice-of-the-eu-decides-that-gdpr-right-of-access-allows-data-subjects-to-request-the-identity-of-each-data-recipient/

- 22 https://www.unep.org/news-and-stories/story/management-natural-assets-key-sustainable-development-inclusive-wealth

- 23 Floridi, Luciano, Artificial Intelligence as a Public Service: Learning from Amsterdam and Helsinki (October 18, 2020). Available at SSRN: https://ssrn.com/abstract=3827084 or http://dx.doi.org/10.2139/ssrn.3827084

- 24 https://www.government.nl/documents/reports/2021/07/31/impact-assessment-fundamental-rights-and-algorithms

- 25 https://ico.org.uk/for-organisations/guide-to-data-protection/guide-to-the-general-data-protection-regulation-gdpr/data-protection-impact-assessments-dpias/how-do-we-do-a-dpia/

- 26 https://www.thewhynotlab.com/_files/ugd/aeaf23_62a52b0671c2466e999b2064c0cdb95b.pdf

- 27 https://www.thewhynotlab.com/post/reminding-the-g7-workers-rights-are-human-rights

- 28 https://www.gov.uk/guidance/the-information-and-consultation-regulations

- 29 This 2020 report by EPSU breaks these down well: https://www.epsu.org/sites/default/files/article/files/Toolkit%20January%202021.pdf

- 30 https://www.tuc.org.uk/sites/default/files/2022-08/People-Powered_Technology_2022_Report_AW.pdf

The potential principles and commitments highlighted by the TUC are:

- Good work 31

- Respect for human rights

- Introducing Automated Management Systems (AMS) only with union consultation and agreement

- Reinvesting cost savings

- Protecting and creating jobs

- Recognising the potential positive impacts of AMS

- Ensuring equal access to benefits of AMS, e.g. training systems

- Introducing new technology to reduce working hours but not pay

- Building on existing legal rights and obligations – never falling below them

- Preventing technology that benefits one group of workers over another

All eight key issues include important recommendations. Although they are partly covered by some of the remaining seven issues, it could be worth adding the following four clauses or principles to the list of principles set out at the start of an agreement.

- Anti-commodification clause - that datasets that include workers’ personal data and/or personally identifiable information cannot be sold, given away or transferred to third parties without the explicit consent of the workers.

Note: The California Privacy Rights Act (CPRA) is the only data protection regulation in the world to include an anti-commodification clause. It states that workers (and consumers) have the right to opt out of employers selling or sharing their data with third parties such as data brokers. 32

- Governance clause - in line with the National Policy recommendation listed above, all digital technologies deployed at the workplace must be inclusively and periodically governed.

- Responsibility clause - stipulating that management at all times is the responsible party and is liable for intended as well as unintended harms caused by the deployment of the digital technologies.

- Explainability clause - that all systems deployed must be explainable by management.

Note: This clause will ensure that humans always remain in control of the digital technologies deployed. If management cannot explain how a system reaches its outcomes, the system should not be used.

With these principles, some of the core requirements would be in place to ensure a responsible, fair, transparent and respectful implementation of digital technologies in workplaces. They address the frustration the interviewees felt with regard to the failed roll-out of the digital systems they had to use. They also address the lack of transparency felt by some of the interviewees, as well as the lack of meaningful consultation. The following subsections explore further themes ripe for collective bargaining.

Transparency

In relation to automated management systems, many workplace representatives report that they do not know which digital technologies are being deployed in their workplaces. This is not only problematic but also a breach of the employer’s obligations under Articles 13 and 14 of the UK GDPR, known as the Right to be Informed. 33

To improve transparency, unions should invoke these articles of the UK GDPR. In addition, the Transparency clause (number 4 in the National Policy Recommendations above) can be copied into workplace negotiations.

Data Protection and Rights

The UK Government is in the process of drafting proposals to ‘simplify’ the UK GDPR. Now is the time to make the most of its empowering clauses. These are:

Articles 13 & 14: “Information to be provided”

Article 5(1)(c): “Principles of data processing”

Personal data shall be adequate, relevant and limited to what is necessary in relation to the purposes for which they are processed (‘data minimisation’);

Article 15: “Right of access” (including data subject access requests)

Article 22: “Automated decision making” (including the right not to be subject to this)

Article 35: “Data Protection Impact Assessments (DPIA)” 34

Must be carried out if processing is “high risk” – all processing of workers’ data is!

Article 29 Working Party Opinion: employers should consult with a “representative sample of employees” when conducting these.

Informed consent is generally not a valid legal basis for processing data at work

Article 29 Working Party Opinion 2/2017 on data processing at work

Article 80: “Representation”

In effect, this gives unions the right to represent their members in claiming their GDPR rights.

Article 88: “Processing in the context of employment”

This article says that more specific rules to ensure the protection of rights and freedoms in respect of the processing of employees’ (workers’) personal data in the employment context can be agreed by law or by collective agreements.

Enacting these rights will give trade unions and their members invaluable protections with regards to what data employers can collect, how they can process them, what obligations management has to inform the workers and how third parties can or cannot use the data they have from workers. They also give trade unions the right to represent their members in (certain) data protection questions.

An important element to negotiate here is also the suggested Anti-commodification clause mentioned above under Guiding Principles.

Whilst data protection concerns were not raised by the interviewees, it was noted during one of the interviews that a freedom of information request had been rejected. The request asked who had developed or supplied the digital system the workers were required to use. Under UK GDPR Article 15 (subject access requests), the employer would be obliged to provide this information according to a recent ruling by the Court of Justice of the European Union (CJEU). 35

Inclusive Governance

One of the demands in the TUC’s AI Manifesto concerns union consultation and agreement as a condition for introducing new technologies. This recommendation extends that principle to the ongoing governance of technologies.

As such, this recommendation is in line with National Policy Recommendation number 8 on Inclusive Governance and can be copied directly from there.

Why is this recommendation important? Many digital technologies, especially those built on Machine Learning or Deep Learning, are self-learning systems. They learn how to solve a particular problem or task from data rather than from step-by-step instructions written by a human. This also means that these systems can learn to do things wrongly, or in violation of the law. For example, Amazon had to scrap an automated recruitment tool because it discriminated against women. Trained on historical data made up mostly of applications from men, it learnt to treat female applicants as less suitable and downgraded them. 36 The system was therefore discriminatory.

Many of the guiding principles above, as well as the demand for inclusive governance, will require management to be willing to deploy digital technologies in a way that respects workers’ and citizens’ fundamental rights, freedoms and autonomy. Judging by the reports from the interviewees, this is currently far from the case. To help begin a conversation with management, the Why Not Lab has developed a Co-Governance of Algorithmic Systems Guide.37

- 31 In the TUC publication PEOPLE-POWERED TECHNOLOGY, ‘good work’ is described as: sensible limits on hours of work and fair levels of pay, fair and just productivity measures, respect and dignity at work, respect for human autonomy, respect for human agency above technological control.

- 32 See section 8 here: https://www.caprivacy.org/cpra-text-with-ccpa-changes/

- 33 https://ico.org.uk/for-organisations/guide-to-data-protection/guide-to-the-general-data-protection-regulation-gdpr/individual-rights/right-to-be-informed/

- 34 See Prospect’s helpful guide: https://prospect.org.uk/about/data-protection-impact-assessments-a-union-guide/

- 35 https://www.insideprivacy.com/gdpr/gdpr-rights/court-of-justice-of-the-eu-decides-that-gdpr-right-of-access-allows-data-subjects-to-request-the-identity-of-each-data-recipient

- 36 https://www.bbc.com/news/technology-45809919

- 37 https://www.thewhynotlab.com/_files/ugd/aeaf23_62a52b0671c2466e999b2064c0cdb95b.pdf

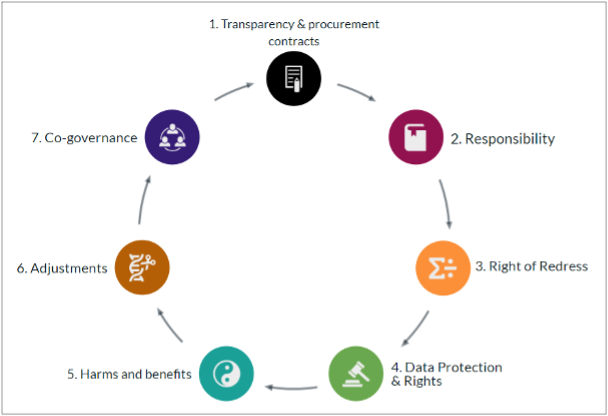

The guide covers the 7 themes shown in the figure above. In total, it includes 19 questions workers can ask management to begin a co-governance dialogue (see the questions in Annex 1).

In previous cases where workplace representatives have used the guide, management was initially hesitant in its responses, most likely because it did not immediately know the answers to all of the questions. That is fine: the guide is aimed at capacity building and at gradually holding management accountable and responsible for the digital systems it deploys.

The guide will provide union representatives with some of the key information they need to negotiate sound clauses into collective agreements. These clauses can be modelled on the questions in the guide. For example, the theme 1 question "Who designed and owns these systems? Who are the developers and vendors?" could be reframed as:

"Management shall prior to the deployment of digital technologies provide all workers with information about developers and/or vendors that have access to workers' personal data and/or personally identifiable information."

This clause will ensure that the recent CJEU ruling 38 mentioned in the introduction of this report is respected.

Another example could be the question under theme 3: Right of Redress. It asks: “What mechanisms can be established to ensure that workers have the right to challenge actions and decisions taken by management that are assisted by algorithms?” This could be reframed as:

“Workers have at all times the right to seek redress against any decisions made by management that are assisted by algorithms. Exercising this right must not have any negative repercussions for the worker.”

Additional Themes

As mentioned at the beginning of this section, the deployment of digital technologies will affect all aspects of work. The global union for public services, PSI, has just launched a digital bargaining hub related to the digitisation of work. It is a searchable, open database of contract language organised into 7 themes. It will be helpful for bargaining officers as they embark on negotiating digital clauses.

In addition to the key themes mentioned above, here are a few suggestions for further themes that are well suited to collective bargaining:

- Management and workplace representatives’ digital competencies.

- The right to training/lifelong learning. This includes mandatory requirements to support displaced workers. See National Policy Recommendation “Disruption’s Obligations” above and the 2022 TUC Report Opportunities and threats to the public sector from digitisation.39

- Limits on employers’ surveillance of workers, including outside working hours via apps on private devices.

- Unions’ rights to organise remote or hybrid workers.

Comments

This section has highlighted core themes related to the deployment of digital technologies in public services, and the impact these have on workers. In particular, the section has focussed on themes that were not directly addressed by the interviewees, but that go to the core of many of the challenges they face: performance management (and the data created from this), lack of transparency, lack of meaningful consultation and therefore a feeling of not being valued, as well as the changes in work leading to displaced workers and a rise in precarious contracts.

The interviewees said they felt their managers had little say over the purposes, nature and development of the systems. This points to a strong degree of centralisation of decision-making power. Interestingly though, the union demand for sectoral collective bargaining, which in itself is a form of centralisation, has not been met by the employers.

But if local managers do not have sufficient understanding of or influence over the systems deployed, and neither do the workers, their experiences, knowledge, routines and know-how will ultimately be lost in the development process, especially in the agile projects mentioned in the introduction of this report. Centralisation, together with the lack of transparency and meaningful consultation, is most certainly among the key reasons why these digital systems are failing and why workers as well as citizens are harmed. It raises the question of how efficient these efficiency-seeking systems really are.

It is clear from the two sets of recommendations above that to get this right, workplace representatives and union representatives need to be trained on the ins and outs of digital technologies so they can set the demands they need in policy and in collective bargaining. The last set of recommendations deals with the issue of training.

Further Reading/Union Tools

- CHAPTER XV of 2021 Spanish Collective Agreement of the banking sector, available here: https://www.boe.es/diario_boe/txt.php?id=BOE-A-2021-5003

- EPSU 2021: Responding to the challenges of digitalisation - A toolkit for trade unions

Training Recommendations

To negotiate national policy changes and/or collective agreements, and to monitor the deployment and effects of digital technologies effectively, unions and workplace reps must have the necessary know-how and know-what. This last set of recommendations concerns the contents of basic and advanced training schemes and the expertise unions need to have access to. The recommendations are not concerned with management’s obligations to train workers in the new systems deployed; these are covered in the National Policy Recommendation “Disruption’s Obligations”.

Introductory Remarks

Some of the recommendations for national policies and collective bargaining above require that unions and workplace representatives can set demands before digital technologies are deployed in workplaces. Others require that they can continuously monitor how these technologies are performing and affecting various groups of workers (and indeed members of the public) and then put demands to management.

This requires strong foundational knowledge of the ins and outs of digital technologies. Below, the contents of a foundational training course are listed, followed by the contents of an advanced training course. Experience from other countries shows that the complexity of the issues at hand will require workplace representatives to have access to expertise in the union. Some unions are even discussing creating a whole new category of workplace reps called Digital Representatives. Like Safety Representatives, digital reps should have the legal right to:

- Represent workers in discussions with the employer on the impacts of digital technologies on mental/physical health, fundamental rights, working time, work organisation and more

- Represent workers in discussions with authorities

- Investigate complaints, carry out inspections of the workplace and inspect relevant documents

- Be paid for time spent on carrying out their functions, and to undergo training.

Foundational Training Course

This training should cover topics such as:

- Why digital technologies are different from their analogue predecessors, and why union action is required.

- What is data - where it comes from and how it is used

- Under the hood: what are the building blocks of digital technologies? Getting to know algorithms, artificial intelligence, machine learning and deep learning.

- Understanding where positive and negative outcomes can be produced.

- Data protection regulation - benefits/disadvantages for workers

- Other relevant legal rights

Advanced Training Course

Building on the foundational course, this more advanced course should cover the following:

Identifying typical algorithmic and data-generating systems deployed at work in public services: From recruitment to training, performance monitoring, line management, tracking workers, discipline and dismissal to monitoring union activities.

How to map digital technologies at work, including:

- Using legal rights to understand what information management is obliged to provide you with

- Finding additional information, such as the developers/deployers and country of origin, for example through internet searches and network knowledge

- Charting responsible managers

- What is the system being used for, and where in the organisation

- Charting where these systems can threaten/support the interests of workers, unions and, where relevant, members of the public.

- Mapping data across the data lifecycle. How it's collected, analysed, stored, and what subsequently happens to it. Is it sold, transferred, or deleted? See the Why Not Lab’s Data Life Cycle at Work guide40 to support this.

- Critical understanding of audits and impact assessments, including human rights impact assessments.

- Defining collective bargaining demands and using tools available to find inspiration from other unions who have negotiated on similar issues

- Governing technologies - what to look out for, how to hold management accountable and liable. Using the co-governance wheel in practice.

Union Experts

In addition, trade union officers should be available to support reps in monitoring and influencing digital technologies at work. This includes charting negotiated contract language and providing legal and policy support.

Unions could also benefit from exploring how they can use responsibly sourced data in campaigning and organising efforts. This requires the union to have access to data analysts and storytellers, in-house or external.

Public Services International (PSI) has created a guide for unions to map their digital readiness: the Digital Impact Framework. 41 Using this guide can help unions tap into the benefits of digital technologies in a privacy-preserving, responsible way.

Comments

Whilst the training proposed might seem daunting at first, it is not dissimilar to learning about safety rules and regulations, mapping these and raising problems with management. Across the world, unions are embarking on this training, and tools and guides are being created to support these efforts. One thing is clear: as the world of work becomes increasingly digitised, unions need to equip themselves with the know-how and know-what to ensure and protect workers’ rights.

End Reflections

This report has presented some key recommendations to unions so they can continue to safeguard workers’ rights in digitised workplaces. Ranging from themes and clauses on a national policy level, to new collective bargaining demands and lastly to union training programmes, the recommendations must be seen as suggestive and not conclusive. They all aim to provide responses to the structural, organisational and political problems identified in the introduction.

Structurally, they aim to give trade unions influence over (a) what systems should and should not do, (b) what the contractual arrangements between developers and deployers are with regard to workers’ data, and (c) how systems are rolled out.

Organisationally, they will ensure informed and meaningful consultation and, importantly, institutionalised co-governance structures. These will be key to identifying and remedying (un)intended harms.

Politically, they aim to ensure decent work and a more equal balance of power in the workplace. They will protect the rights, freedoms and autonomy of the workers and stop the hollowing out of labour standards.

The input and thoughts from the interviewees have been invaluable. They not only gave the union perspective on the massive changes public service workers are experiencing, but also helped identify issues that require specific attention. Whilst many of the recommendations build on problems identified by the interviewees, some reflect issues they did not raise, but that have significant impact on workers’ rights.

This mirrors lessons learnt by unions across the world: we don’t know what we don’t know. In digitised workplaces, what we don’t know is how digital systems affect workers, produce bias and discrimination and perpetuate inequalities, and what is needed to put safeguards in place to prevent this.

The challenges are many, and the themes raised above will demand new areas of responsibility for the unions. As the guardians of decent work, the unions have a key role to play in reshaping the use of digital technologies so workers’ rights, freedoms and autonomy are respected.

For public service unions, this endeavour will additionally be about safeguarding quality public services as more and more services are privatised and new digital technologies are developed by third parties. This creates a whole new dynamic with muddled responsibilities between developers and deployers and a changing balance of power between all involved.

Annex 1: Co-governance guide questions

Transparency & Procurement

1. Which digital systems is the employer using that affect workers and their working conditions? What are the purposes of these systems?

2. Who designed and owns these systems? Who are the developers and vendors?

3. What are the contractual arrangements between developer, vendor and the employer with regards to data access and control as well as system monitoring, maintenance, and redesign?

4. What transparency measures can be established to ensure disclosure of any algorithms being used in the digital system?

Responsibility

5. What oversight mechanisms does management have in place? Who is involved?

6. What remedies are in place if a system fails its objectives, harms workers, and/or if management fails to govern the digital system?

7. How do you ensure the system is in compliance with existing laws?

8. Which managers are accountable and responsible for these systems?

Right of Redress

9. What mechanisms can be established to ensure that workers have the right to challenge actions and decisions taken by management that are assisted by algorithms?

Data protection and rights

10. If personal data and personally identifiable information are processed in these systems, what protections for that data currently exist? What additional protections are needed?

11. Are datasets that include workers’ personal data and personally identifiable information sold or moved outside the company?

12. What mechanisms can be established to ensure workers have the right to access and correct personal data and personally identifiable information?

Harms and benefits

13. What assessments have you and/or a third party made of risks and impacts (positive as well as negative) on workers’ wellbeing and working conditions?

14. How do you control for and monitor possible worker harms in these systems, e.g., health and safety, discrimination and bias, work intensification, deskilling?

15. What is your plan for periodically reassessing the systems for unintended effects/impacts?

Adjustments

16. What are the mechanisms and procedures for amending the digital systems?

17. How will you log your assessments and adjustments?

Co-governance

18. What mechanisms can you put in place, so you are party to this governance?

19. What skills and competencies do management and workers need to implement, govern, and assess the digital systems responsibly and knowledgeably?

- 38 https://curia.europa.eu/juris/document/document.jsf;jsessionid=175631AB0AA60F23E58A5E588C103DD0?text=&docid=269146&pageIndex=0&doclang=en&mode=req&dir=&occ=first&part=1&cid=76292

- 39 https://www.tuc.org.uk/research-analysis/reports/opportunities-and-threats-public-sector-digitisation

- 40 https://www.thewhynotlab.com/_files/ugd/aeaf23_d17c99e088564ccdad0554d0057302ff.pdf

- 41 https://dif.publicservices.international/
